Asking the wrong questions of technology…

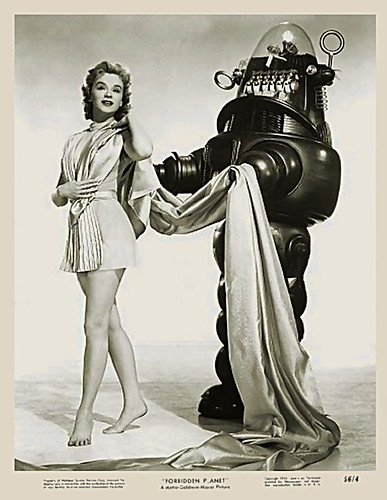

There has been a huge roll-out of technology with the advent of SBAC testing in California (and elsewhere in the country with CCSS testing in all its forms). But is this really making education better? Is it making our kids better? Or is it like using Robbie (shown above) for little more than holding the pretty lady’s train: not the best use of public monies?

I know, I’m an ed tech enthusiast, but if this is the best we (and by “we,” I include myself) can come up with…color me a Luddite and hand me some paper and pencils. I’m going to talk about what I think are stupid choices. Some of them are mine (and can be adjusted and improved rather easily), and some belong to the entire public education community and show little sign of improving any time soon.

In my last weekly reflection, I discussed how my kids did better writing with pencil and paper than writing online. Over the weeks, I would say their online writing has generally improved, but my most fluent writers aren’t always as prolific online and don’t always have as strong a voice there.

Some studies of reading, and of writing in the form of note-taking, find paper and pencil more effective than computers and screens for the same task:

To Remember a Lecture Better, Take Notes by Hand – Robinson Meyer – The Atlantic

The Reading Brain in the Digital Age: The Science of Paper versus Screens – Scientific American

That second link has some caveats in it, and a really good question at the end:

Although many old and recent studies conclude that people understand what they read on paper more thoroughly than what they read on screens, the differences are often small…

Perhaps, then, any discrepancies in reading comprehension between paper and screens will shrink as people’s attitudes continue to change…

But why, one could ask, are we working so hard to make reading with new technologies like tablets and e-readers so similar to the experience of reading on the very ancient technology that is paper?

So I can see the mistake I’ve been making: I’m essentially transferring the writing I used to have them do on paper to a wiki. Studies show that students’ online writing improves with interaction, especially peer interaction, and with multiple rounds of it. I only started having them write online this trimester, and my general plan was to get the mechanics of writing there down first, then start adding interactive writing. Now is that time, and I’ll share how it goes.

Now to a more general observation. These so-called new tests are falling into just this trap, and that risks making all these fancy new computers look like a huge waste of money. Look at the tasks students are asked to do on the sample test: the test makers have essentially moved the same tasks from paper-and-pencil tests to the computer. All that’s changed is that some of the more cumbersome tasks become a little less so on a computer. Here is a brief list of the tasks (not comprehensive, but it covers most of what’s on the test):

- Standard multiple-choice questions (yawn!)

- Questions with “checkbox” option, allowing multiple correct responses (really?)

- Short-response writing

This is new for California, but New York has done this for years. It marginally improves the authenticity of the assessment. It’s like going from imitation, non-dairy Cheez Whiz to the brand-name version. It’s still Cheez Whiz. If it gets graded by “computers” (algorithms), I’ll be even less impressed.

- Select the word/sentence/etc. that shows “something”

This is the big new thing, but really, ever since I first saw it, I’ve been unimpressed. It’s not much more authentic or demanding than giving students a multiple-choice list of words, or numbering underlined words/sentences and having them pick the significant one(s). This is the sort of thing guaranteed to impress adults but is a huge yawn for students.

Think I’m being harsh? After these annual tests, I generally ask students to comment on them: Was it harder or easier, and so on? One student summed it up well: “They weren’t that different than the old tests, we just used computers.”

The two big things that could change in future years’ testing are scoring by algorithm/machine and the introduction of “computer adaptive” algorithms, which adjust the test so that it gives each student “just right” questions. Both of those ideas are fraught with problems that have been covered earlier here and here. Consider this, too: if they don’t machine-score the writing, then the “decisions” about difficulty level made for each student will be based only on non-written answers.

Image Credit: 1956 – Forbidden Planet by James Vaughan, on Flickr